The global business landscape in 2026 has reached an inflection point: spatial computing has moved from a specialized novelty to a requisite component of the modern enterprise technology stack. The proliferation of virtual reality (VR), mixed reality (MR), augmented reality (AR), and smart eyewear has fundamentally altered the methodologies of high-stakes training, remote collaboration, and complex data visualization. This post provides a technical analysis and strategic roadmap for nine pivotal devices currently dominating the professional market, including several recent arrivals.

These systems represent a spectrum of capabilities, ranging from high-compute headsets designed for surgical precision to ambient AI glasses engineered for all-day heads-up productivity. Devices like these are being used for everything from marketing experiences to training applications. Groove Jones has been there since day one of the modern-day revolution in 2014, and we have run thousands of people through these types of programs. As organizations integrate these tools into new initiatives, the differentiation between platforms is increasingly defined by the maturity of their operating systems, the fidelity of their environmental mapping, and the scalability of their deployment frameworks. The space continues to evolve, and you need a trusted advisor to help you along the way. Here is our take on some of the latest options for 2026.

The High-Fidelity Frontier: Spatial Computing at the Premium Tier

In the most demanding professional environments, the focus remains on “human-eye” resolution and near-zero latency. These devices are intended for the “spatial pro”—individuals whose work requires the highest degree of visual accuracy and the ability to process massive 3D datasets without a tethered connection.

Apple Vision Pro: The Benchmark for Precision and Workflow Integration

The 2026 iteration of the Apple Vision Pro remains the industry’s high-end benchmark for visual fidelity and ecosystem cohesion. Building upon the foundational success of the initial 2024 launch, the 2026 model integrates the Apple M5 chip, a 10-core processor designed to handle significantly heavier computing loads in mixed reality than its M2-based predecessor. This architectural leap provides the computational headroom required for complex 3D environments and intensive multitasking, allowing users to summon a Mac Virtual Display that functions as a high-resolution, floating workstation within a physical environment. The device maintains its dual Micro-OLED display system, packing 23 million pixels across two panels, resulting in a perceived resolution that exceeds 4K per eye. A critical enhancement in this generation is the support for refresh rates up to 120Hz, up from the original 100Hz, which significantly reduces motion blur and improves the fluidity of interactions in high-performance simulations.
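To put the refresh-rate change in concrete terms, the time available to render each frame is the reciprocal of the refresh rate. The snippet below is illustrative arithmetic only; `frame_interval_ms` is a helper name introduced here and is not part of any Apple API.

```python
def frame_interval_ms(refresh_hz: float) -> float:
    """Return the time budget for a single frame, in milliseconds."""
    return 1000.0 / refresh_hz


# Refresh rates quoted in the text: the original 100Hz maximum and the new 120Hz.
for hz in (100, 120):
    print(f"{hz} Hz -> {frame_interval_ms(hz):.2f} ms per frame")
# 100 Hz -> 10.00 ms per frame
# 120 Hz -> 8.33 ms per frame
```

Moving from 100Hz to 120Hz shortens each frame by roughly 1.7 ms, which is where the reduction in perceived motion blur comes from.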

The sensory stack of the Apple Vision Pro is unmatched in the consumer-professional market, utilizing 12 cameras, five sensors, and six microphones to provide an intuitive interface driven entirely by eyes, hands, and voice.

The R1 co-processor is dedicated to processing these sensor inputs with a photon-to-photon latency of just 12 milliseconds, a benchmark that ensures the virtual overlays remain perfectly anchored to the real world even during rapid head movements. This low latency is essential for professional XR workflows, where any misalignment between digital instructions and physical machinery could lead to operational errors. For security, the device utilizes Optic ID, an iris-based biometric system that encrypts data within the Secure Enclave, ensuring that sensitive corporate information remains protected during remote collaboration sessions.

In practical business applications, the Apple Vision Pro (2026) is increasingly utilized for real-time remote assistance in specialized fields such as aerospace and advanced manufacturing. In healthcare, the headset facilitates telehealth, surgical preparation, and immersive training simulations that allow clinicians to practice high-risk procedures in a safe, virtual environment. Architects and engineers leverage the device’s spatial audio and high-resolution 3D main camera system to conduct virtual property tours and collaborate on interactive architectural planning in real-time. The 1TB storage variant is specifically targeted at creative professionals and engineers working with large local assets, such as high-resolution video recordings or massive 3D CAD files.

Samsung Galaxy XR: The Flagship for Open Ecosystem Integration

The Samsung Galaxy XR, released in late 2025, represents the definitive entry of the Android ecosystem into the high-end spatial computing market. Co-developed with Google and Qualcomm, the device is the premier hardware platform for Android XR, an operating system designed to extend the existing Android management framework into immersive environments. The Galaxy XR is powered by the Qualcomm Snapdragon XR2+ Gen 2 chipset and 16GB of RAM, providing a performance profile that rivals dedicated spatial computers. The hardware features dual Micro-OLED displays with a combined resolution of 27 megapixels (3552 x 3840 per eye), supporting a peak refresh rate of 90Hz. This high pixel density is optimized for reading text and viewing complex data sets, addressing a common pain point in earlier generations of enterprise headsets.
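As a quick sanity check on the 27-megapixel figure, the total follows directly from the per-eye resolution quoted above. The helper name `total_megapixels` is our own, used purely for illustration.

```python
def total_megapixels(width: int, height: int, panels: int = 2) -> float:
    """Combined pixel count across all panels, in millions of pixels."""
    return width * height * panels / 1_000_000


# Per-eye resolution quoted for the Galaxy XR: 3552 x 3840, two panels.
galaxy_xr_mp = total_megapixels(3552, 3840)
print(f"Galaxy XR: {galaxy_xr_mp:.1f} MP")  # Galaxy XR: 27.3 MP
```

The same helper can be pointed at any of the per-eye resolutions cited in this post to compare headsets on a like-for-like basis.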

The physical design of the Galaxy XR emphasizes comfort for long-duration use, featuring an external 302g battery pack connected via a cable to reduce the weight on the wearer’s face. The head-mounted unit itself weighs 545g and includes an ergonomic forehead cushion and a rear adjustment dial to ensure a stable fit during active movement. Input is managed through a comprehensive sensor array including six world-facing cameras for 6DoF inside-out tracking, four internal cameras for eye tracking, and a depth sensor that maps the surrounding environment to create realistic interactions between virtual objects and physical surfaces.

From a business perspective, the Galaxy XR’s greatest strength is its support for Android Enterprise, allowing IT departments to manage the headsets using the same tools they use for a fleet of smartphones—including remote wipe, password policies, and zero-touch enrollment. In retail, merchandising teams use the “Auto Spatialization” feature in Google Chrome and YouTube to convert 2D store plans into immersive 3D walkthroughs, allowing them to evaluate product placement and customer flow before fixtures arrive on-site. In manufacturing and utilities, the device provides hands-free continuity for technicians, who can access “context-aware” guidance through Google Gemini AI to solve problems in real-time without taking their hands off the equipment. The device’s integration with the Galaxy Connected Experience allows for seamless file sharing and the ability to answer calls directly within the XR environment, maintaining a unified workflow across mobile and spatial platforms.

Scaling Mixed Reality: The Workhorse Tier

While high-end devices target the “spatial pro,” the majority of enterprise deployments focus on scalability, training, and operational efficiency. Meta and Pico dominate this segment with hardware that balances cost-to-performance for large-scale “fleet” deployments.

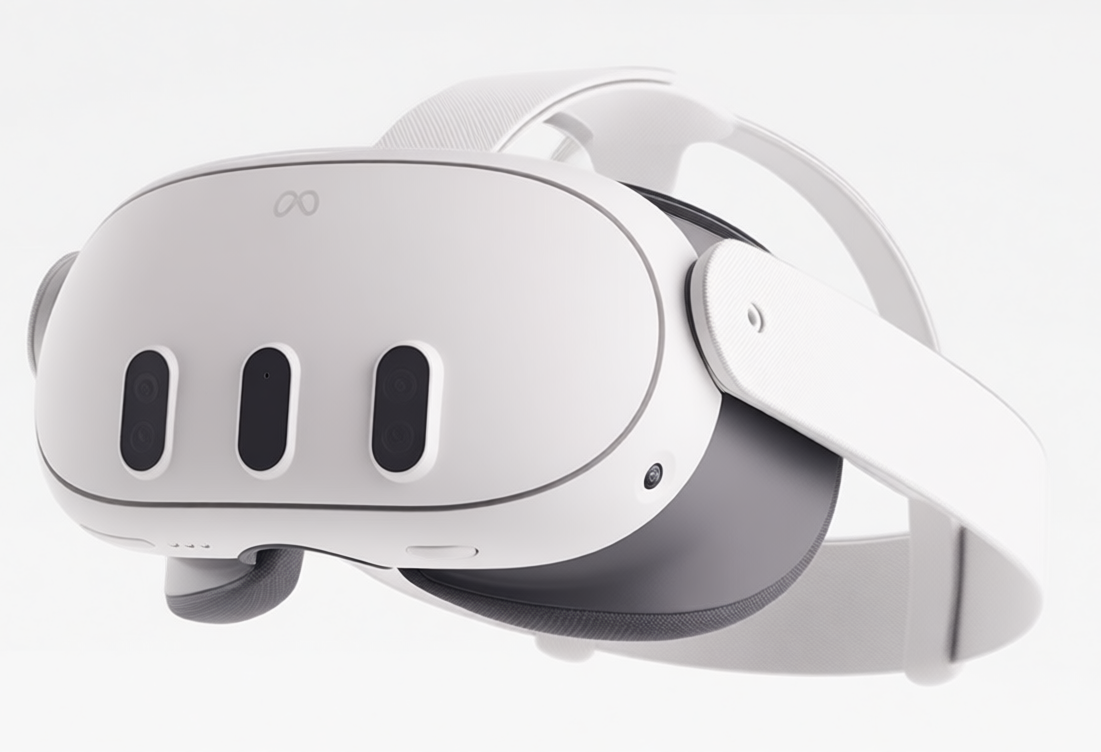

Meta Quest 3: The Universal Standard for Enterprise Mixed Reality

The Meta Quest 3 is widely regarded as the most balanced mixed reality headset for enterprise use in 2026, offering a combination of high-fidelity passthrough, a mature developer ecosystem, and competitive pricing at $499. Powered by the Snapdragon XR2 Gen 2 processor, the Quest 3 delivers double the GPU performance of the previous generation, which is essential for rendering complex training simulations and architectural visualizations without the need for a tethered PC. The headset utilizes advanced “pancake” lenses that provide superior edge-to-edge clarity and a 110-degree horizontal field of view, preserving the peripheral awareness that safety-critical training environments depend on.

Recent deployments include work with the U.S. Army National Guard, Angelo State University, and Svante.

The Quest 3 distinguishes itself through its high-fidelity color passthrough and integrated depth sensor, which maps physical rooms with high accuracy. This enables “true” mixed reality immersion where virtual objects remain perfectly occluded by physical furniture, a feature that is transformative for retail planning and interior design. Furthermore, the Quest 3 is supported by a large ecosystem of enterprise tools, including Meta Horizon Managed Solutions, which provide a dedicated framework for fleet management, though this often requires a subscription for full professional functionality.

The practical business uses for the Meta Quest 3 center on its price-to-performance ratio for mid-scale deployments. Organizations utilize the device for interactive showcases and corporate events where a quick setup and intuitive user interface are paramount. The headset’s integration with Microsoft Mesh and other productivity tools makes it a natural choice for virtual meetings and collaborative design reviews. In vocational training, the Quest 3’s hand-tracking capabilities allow trainees to practice manual tasks—such as engine repair or medical procedures—without the need for clunky controllers, improving the transfer of skills to the real world.

Meta Quest 3s: Democratized Spatial Computing for Large-Scale Fleets

The Meta Quest 3s was introduced as an entry-level variant intended to replace the Quest 2 at a $299 price point, making it the most cost-effective solution for budget-conscious fleet deployments. Despite its lower price, it shares the same Snapdragon XR2 Gen 2 processor as the Quest 3, ensuring that software performance and AI capabilities remain consistent across an organization’s hardware tiers. To achieve this affordability, Meta reused the Quest 2’s Fresnel lens system and its 1832 x 1920 per-eye resolution, resulting in a headset that is thicker and has a slightly more “grainy” image than the standard Quest 3.

However, the Quest 3s retains the high-quality color passthrough cameras of the Quest 3, allowing it to perform mixed reality tasks that were previously impossible at this price bracket. It lacks the dedicated depth sensor of the more expensive model, relying instead on computer vision for environmental mapping, which is sufficient for most general-purpose training and productivity applications.

For business, the Meta Quest 3s is the ideal choice for massive rollouts in education and corporate training where thousands of units are required for a distributed workforce. It serves as a gateway to the Meta ecosystem, allowing companies to deploy the same training modules to entry-level employees on the 3s as they do to management on the Quest 3 or Quest Pro. The inclusion of a hardware “action button” for toggling the passthrough cameras makes it more accessible for first-time users in professional settings who may need to quickly switch between the virtual and physical world.

Pico 4 Ultra Enterprise: The Ergonomic Powerhouse for Multi-Window Productivity

The Pico 4 Ultra Enterprise, developed by ByteDance, is specifically engineered to address the needs of businesses requiring high-performance mixed reality with a focus on all-day comfort. Powered by the Snapdragon XR2 Gen 2 platform, it features 12GB of RAM—50% more than the Meta Quest 3—which allows for significantly better multitasking. The PICO OS supports a “360° screen” workspace where multiple expandable windows can be opened simultaneously, each resizable up to 280 inches, making it a compelling alternative to physical multi-monitor setups.

A standout feature of the Pico 4 Ultra Enterprise is its ergonomic “balanced” design, where the battery is mounted on the back of the head strap to offset the weight of the optics. This weight distribution, combined with a lightweight 580g total mass and high-resolution dual 32MP color passthrough cameras, makes it one of the most comfortable headsets for sustained professional use. Additionally, it supports Wi-Fi 7, which reduces wireless streaming latency to approximately 5 milliseconds, enabling fluid PC-VR simulations in industries that require the graphical power of external workstations.

Practical business applications for the Pico 4 Ultra Enterprise are found in large-scale deployments where device management is centralized. Through the PICO Business Device Manager, IT administrators can remotely deploy content and monitor the status of all devices across their infrastructure. The device is particularly effective in collaborative “Play Space Sharing” environments for location-based training, where multiple trainees must interact within the same physical and virtual space. The inclusion of a detachable PU face foam cushion ensures that the headset can be easily cleaned after each session, a critical requirement for high-throughput corporate training labs.

Pico 4 Enterprise: Specialized Data Collection and Research

While the Ultra model focuses on raw compute and MR, the standard Pico 4 Enterprise remains a vital tool for organizations that require specialized internal sensors. It is one of the few mainstream enterprise headsets to feature integrated eye tracking and face tracking as standard, utilizing three additional internal cameras to capture subtle facial expressions and pupil movements. This makes it an invaluable asset for behavioral research, allowing companies to collect data on user engagement and gaze direction during product testing or psychological studies.

The Pico 4 Enterprise shares the same 4K+ display resolution (2160 x 2160 per eye) and pancake optics as its successor, ensuring high visual clarity for professional interactions. It runs on the older Snapdragon XR2 chipset, but for many business applications—such as virtual meetings and collaborative design—the processing power remains more than adequate.

The business uses for the Pico 4 Enterprise are focused on high-fidelity avatars and data-driven insights. In tourism and education, the device is used to create realistic virtual tours where the guide’s avatar reflects their real-time expressions, improving the sense of presence and connection for participants. The PICO Business Suite allows for “kiosk mode,” locking the device into a specific application, which is ideal for public-facing demonstrations at conferences or in retail showrooms. Furthermore, the easy-to-clean PU leather face cover makes it a more hygienic option for workplace environments compared to standard consumer models.

The Ambient AI Revolution: Smart Glasses for the Modern Workforce

In 2026, the market has seen a surge in “all-day” wearables that prioritize social acceptability and lightweight form factors. These devices function as ambient assistants, bridging the gap between digital data and physical action without the bulk of a traditional headset.

Meta Ray-Ban Display: The Frontier of Heads-Up Productivity

The Meta Ray-Ban Display is perhaps the most socially significant wearable of 2026, successfully merging iconic Ray-Ban styling with advanced ambient AI. Unlike earlier smart glasses that relied solely on audio, the “Display” model features a full-color monocular waveguide projection system integrated into the right lens. This 600 x 600 pixel display is capable of showing notifications, images, video previews, and real-time visual responses from Meta AI. A standout technical innovation is the Meta Neural Band, which uses electromyography (EMG) to interpret muscle signals in the wrist, allowing the wearer to navigate the glasses’ interface through subtle, silent hand gestures.

The glasses are equipped with a 12MP camera and a five-microphone array, enabling high-quality 3K Ultra HD video capture and clear two-way voice communication. The integration of multimodal AI allows the glasses to “see” what the user sees, providing real-time translations of foreign text or step-by-step visual guidance for complex tasks like equipment maintenance or recipes.

In the business sector, the Meta Ray-Ban Display serves as a powerful tool for executive communication and field operations. The new “teleprompter” feature allows presenters to view their notes directly on the lens, facilitating natural eye contact during speeches or recorded content creation. For international business travelers, the glasses provide near-instantaneous translation and live captions, displaying the speaker’s words as text in the user’s field of vision. The “Neural Handwriting” feature is particularly revolutionary for discreet communication; it allows a user to trace letters on any surface with their finger, with the Meta Neural Band transcribing the movements into text messages while the user’s phone remains in their pocket. This allows for a level of heads-up focus that was previously unattainable in professional settings.

Xreal One Pro: The Premium Solution for Mobile Multi-Monitor Workflows

The Xreal One Pro is the flagship of the AR glasses category, designed for professionals who require a high-quality, stable virtual screen on the go. It is the first AR glass to feature a self-developed “X1” spatial computing chip built directly into the frames, which reduces motion-to-photon latency to a market-leading 3 milliseconds. This near-instant response time is critical for “Anchor Mode,” which allows a virtual screen to remain perfectly still in physical space, eliminating the motion sickness often associated with software-based stabilization.

The One Pro utilizes a new “X Prism” optical engine and the latest generation 0.55-inch Sony Micro-OLED displays to achieve a 57-degree field of view—the widest currently available in mainstream AR glasses. This expanded field of view enables a virtual screen equivalent to 171 inches from four meters away, providing ample room for professional multitasking without the “clipping” at the edges found in smaller-FOV glasses.
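The relationship between field of view and apparent screen size is simple trigonometry. The sketch below assumes the 57-degree figure is a diagonal field of view and that the virtual screen fills it completely; neither assumption is confirmed by the text. The function name is ours, for illustration only.

```python
import math


def virtual_screen_diagonal_inches(fov_degrees: float, distance_m: float) -> float:
    """Diagonal of a flat screen that exactly fills the given FOV at a distance.

    Assumes fov_degrees is the diagonal field of view (an assumption here).
    """
    diagonal_m = 2 * distance_m * math.tan(math.radians(fov_degrees) / 2)
    return diagonal_m / 0.0254  # metres to inches


size = virtual_screen_diagonal_inches(57, 4)
print(f"{size:.0f} inches")  # 171 inches
```

Under those assumptions the result lines up with the 171-inch figure quoted above, and the same formula shows how quickly the apparent screen shrinks as the field of view narrows.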

The practical business applications for the Xreal One Pro center on “anywhere” productivity. Professionals who frequently work from planes or trains use the One Pro to create a private, expansive virtual workspace from a single laptop, maintaining data privacy while significantly increasing their available screen real estate. When paired with the “Xreal Eye” accessory, the glasses support 6DoF anchoring, allowing users to place multiple virtual windows in a room exactly like a high-end VR headset but in a form factor that weighs less than 90 grams. The three-tier electrochromic dimming allows the user to switch between “Clear Mode” for situational awareness and “Theater Mode” (100% dark) for deep focus work.

Strategic Outlook and Conclusion

For businesses navigating the spatial computing landscape in 2026, the choice of hardware must be dictated by the specific “radius of activity.” For high-stakes precision work within a controlled environment, the Apple Vision Pro and Samsung Galaxy XR provide the necessary fidelity and compute. For organizations scaling training to thousands of employees, the Meta Quest 3 and Quest 3s offer the best balance of ecosystem maturity and cost. For the mobile professional, the Xreal One Pro and Meta Ray-Ban Display represent the future of heads-up productivity, allowing for a seamless blend of digital and physical workflows.
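For teams that want to encode this guidance in an internal planning tool, the groupings above reduce to a simple lookup. This is a hypothetical sketch: the category keys and the `shortlist` function are names we made up for illustration, and only the device groupings come from the paragraph above.

```python
# Hypothetical category keys; device groupings follow the guidance above.
RECOMMENDATIONS = {
    "precision": ["Apple Vision Pro", "Samsung Galaxy XR"],
    "fleet_training": ["Meta Quest 3", "Meta Quest 3s"],
    "mobile": ["Xreal One Pro", "Meta Ray-Ban Display"],
}


def shortlist(radius_of_activity: str) -> list[str]:
    """Return the candidate devices for a given radius of activity."""
    try:
        return RECOMMENDATIONS[radius_of_activity]
    except KeyError:
        valid = ", ".join(sorted(RECOMMENDATIONS))
        raise ValueError(
            f"Unknown category {radius_of_activity!r}; expected one of: {valid}"
        ) from None


print(shortlist("fleet_training"))  # ['Meta Quest 3', 'Meta Quest 3s']
```

A real selection process would also weigh budget, device management requirements, and content compatibility, so treat this as a starting shortlist rather than a final answer.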

Ultimately, the success of an enterprise XR strategy depends less on the individual headset and more on the integration of that hardware into the company’s broader digital transformation. As spatial operating systems like visionOS and Android XR continue to evolve, and as silicon like the M5 and Snapdragon XR2 Gen 2 push the boundaries of what is possible on-device, the boundary between “digital work” and “physical work” will continue to dissolve. The devices analyzed in this post are the cornerstones of this new reality, providing a platform for the next decade of industrial and corporate innovation.